Ever since the birth of multi-core computing, there has been a need for a parallel-programming architecture. Now, with multi-core processors steadily improving, multi-core computing has become the prevailing paradigm in computer architecture.

Very recently, Microsoft released the beta versions of .NET Framework 4.0 and Visual Studio 2010. All eyes fell on .NET 4, whose labels boasted the advent of parallel programming.

The next question, yet to be answered, is whether migrating from the existing APIs brings any advantages, specifically in helping the ordinary programmer and in multi-threading performance.

My thoughts about .NET 4.0:

1) Multi-core processing ability:

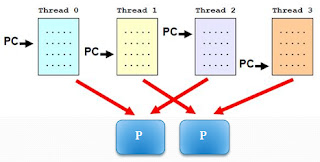

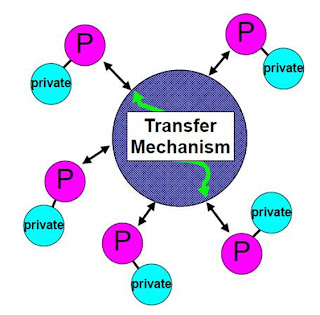

Visual Studio 2010, along with .NET 4.0, has significantly improved the Parallel Extensions, which should help ordinary programmers move to multi-core computing. Microsoft has organised the support in the Framework into four broad areas: the library, LINQ, data structures and diagnostic tools. .NET 4's peers and predecessors lacked this multi-core ability. Communication and synchronization between sub-tasks were considered the biggest obstacles to good parallel program performance. The promising parallel library technology in .NET 4.0 enables developers to define simultaneous, asynchronous tasks without having to work with threads, locks, or the thread pool, and without the other burdens that come with them. This lets the programmer concentrate on the logic of the application rather than spend most of his or her time on that plumbing.
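To make the idea concrete, here is a minimal sketch of that programming model. It is written in Python with the standard `concurrent.futures` module purely as an illustration, not with the .NET parallel library itself, and the function names `word_count` and `count_all` are hypothetical. The point is only that the code describes the tasks and leaves thread creation and scheduling to the library.

```python
from concurrent.futures import ThreadPoolExecutor


def word_count(text):
    """One independent sub-task: count the words in a piece of text."""
    return len(text.split())


def count_all(documents):
    """Run one task per document and return the counts in input order."""
    # The executor owns the worker threads; the caller only describes
    # the work and never creates, locks, or joins a thread directly.
    with ThreadPoolExecutor() as pool:
        return list(pool.map(word_count, documents))


if __name__ == "__main__":
    docs = ["parallel programming", "the dot net framework", "tasks"]
    print(count_all(docs))
```

This shows the shape of the code rather than the speed-up; how much real parallelism you get depends on the runtime and on the kind of work each task does.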

2) Complete support for multiple programming languages and compilers:

Apart from VB and C#, .NET 4 establishes full support for programming languages like IronPython, IronRuby and F#, and for other similar .NET compilers. Unlike 3.5, it encompasses both functional programming and imperative object-oriented programming.

3) Parallel diagnostics:

Unlike Visual Studio 2008, the new Visual Studio 2010 supports debugging and profiling of parallel code extensively. This was an improvement that most Visual Studio 2008 users had been expecting. The new profiling tools provide various data views that display graphical, tabular and numerical information about how a parallel or multi-threaded application interacts with itself and with other programs, so the programmer can see how the application is actually working. The results enable developers to quickly identify areas of concern and to navigate from points on the displays to call stacks and source code.

4) Dynamic language runtime:

The addition of the dynamic language runtime (DLR) is a boon to .NET beginners. The DLR is a runtime environment that adds a set of services for dynamic languages to the CLR. In addition, the DLR makes it simpler to develop dynamic languages and to add dynamic features to statically typed languages. A new System.Dynamic namespace has been added to the .NET Framework to support the DLR, and several new classes supporting the .NET Framework infrastructure have been added to the System.Runtime.CompilerServices namespace.

Anyway, the new DLR provides the following advantages to developers:

Developers can use a rapid feedback loop, which lets them enter statements and execute them to see the results almost immediately.

Support for both top-down and more traditional bottom-up development. For instance, with a top-down approach, a developer can call functions that are not yet implemented and then add them when needed.

Easier refactoring and code modifications (developers do not have to change static type declarations throughout the code).
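As a rough illustration of the last two points, here is a minimal sketch in Python, the language that IronPython brings to .NET. The function names (`build_report`, `sum_amounts`, `format_line`) are hypothetical, and the snippet is plain Python rather than anything DLR-specific.

```python
def build_report(orders):
    """Written first, top-down: it relies on helpers defined further down."""
    total = sum_amounts(orders)
    return format_line("total", total)


# The helpers are added afterwards. Names are looked up when build_report
# is called, not when it is defined, so this ordering works.
def sum_amounts(orders):
    return sum(order["amount"] for order in orders)


def format_line(label, value):
    # No static type declarations: value can be an int or a float
    # without any signature having to change.
    return f"{label}: {value}"


if __name__ == "__main__":
    print(build_report([{"amount": 3}, {"amount": 4}]))
```

Switching the amounts from integers to floats needs no change to any of the three functions, which is the kind of refactoring saving described above.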

If you think parallel programming abilities alone make .NET 4.0 a promising next-generation programming tool, think again! That's not all. There are also a number of enhancements to the Base Libraries for things like collections, reflection, data structures, handling and threading, and lots of new features for the web. Apart from Microsoft, open source communities are also working continuously towards a make-over of today's traditional (non-parallel) programming trend.
